28 May

Cloud Computing Interview Questions and Answers

Cloud computing Interview Questions and Answers are designed to help candidates understand key concepts, practical applications, and real-world scenarios in cloud technology. Preparing these interview questions will not only boost your confidence but also help you handle interview discussions with ease.

Let’s go through some of the most popular Cloud Computing interview questions and answers so you can walk into your interview with clarity, confidence, and a competitive edge! 65% of cloud-related jobs will require Azure Fundamentals knowledge. Start your journey with our Free Azure Fundamentals Course today!

Why Prepare for Cloud Computing Interview?

As demand for cloud solutions grows, so does the need for skilled professionals who can design, deploy, and manage cloud environments. Whether you’re preparing for a role as a Cloud Engineer, Cloud Architect, or Developer, interviewers often assess your understanding of core concepts, service models, deployment types, security practices, and real-world problem-solving skills. Preparing well for a cloud computing interview also matters for the following reasons:

- High Career Demand: Cloud roles such as Cloud Engineer, Architect, and DevOps Specialist are among the most sought-after and well-paid in the IT industry.

- Diverse Topics Covered: Interviews test not just cloud basics, but also real-world problem-solving in areas like scalability, cost optimization, networking, and security.

- Competitive Job Market: With many professionals entering the cloud domain, strong preparation helps you stand out.

- Practical Application: Employers value candidates who can apply theory to real scenarios, such as designing resilient architectures or handling cloud migrations with zero downtime.

Cloud Computing Interview Questions for Freshers

1. What is Cloud Computing?

Cloud computing is the delivery of computing services, such as servers, storage, databases, networking, software, and analytics, over the internet, or “the cloud.” Instead of using local servers or personal devices, users access shared resources from providers like AWS, Azure, or GCP.

It allows you to access and store data, run applications, and use IT resources as needed, usually on a pay-as-you-go basis. This approach provides scalability, cost efficiency, flexibility, and global access, making it essential for modern businesses and individuals.

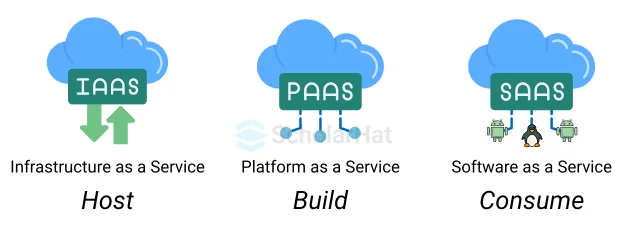

2. What are the main service models in cloud computing?

The three primary models of cloud computing are:

- IaaS (Infrastructure as a Service): IaaS provides virtualized computing resources (e.g., Amazon EC2).

- PaaS (Platform as a Service): PaaS offers a platform for app development (e.g., Google App Engine).

- SaaS (Software as a Service): SaaS delivers software over the internet (e.g., Microsoft Office 365).

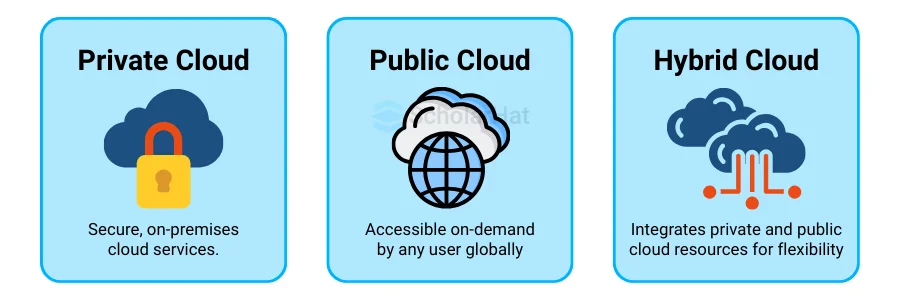

3. What are the deployment models in cloud computing?

- Public Cloud: Owned and operated by third-party providers (e.g., AWS).

- Private Cloud: Dedicated to a single organization (e.g., on-premises VMware).

- Hybrid Cloud: Combines public and private clouds (e.g., sensitive data in private, scalable apps in public).

- Multi-Cloud: Uses multiple public clouds, for example for redundancy.

4. What is virtualization in cloud computing?

5. How does cloud computing work?

Cloud computing works by providing computing services, like servers, storage, databases, software, and networking, over the internet instead of depending on local hardware.

The process generally involves:

- Requesting resources through a web interface, CLI, or API.

- Provisioning through the provider’s orchestration software.

- Accessing and scaling based on usage demand.

- Metering and billing under the pay-as-you-go model.

6. What are the advantages of using cloud computing?

- Cost Efficiency: Pay-as-you-go model reduces upfront costs and maintenance expenses.

- Scalability & Elasticity: Easily scale resources up/down and adjust automatically to demand.

- Accessibility: Access resources globally from any device with an internet connection.

- Speed & Agility: Rapid resource deployment and faster application development.

- Reliability: High availability and robust disaster recovery with multi-region support.

7. What is the difference between scalability and elasticity?

- Scalability: The ability to handle growth in workload by adding resources either vertically (upgrading to a more powerful server) or horizontally (adding more servers).

- Elasticity: The ability to automatically scale resources up or down in real-time, based on workload demands.
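
As a rough illustration of elasticity, the sketch below computes how many instances a workload needs at a given moment and clamps the result between a floor and a ceiling. The function name `desired_instances`, the capacity of 100 requests per second per instance, and the bounds are all assumptions made for this example; this is not any provider's autoscaling API.

```python
import math


def desired_instances(requests_per_sec: float,
                      capacity_per_instance: float = 100.0,
                      min_instances: int = 1,
                      max_instances: int = 10) -> int:
    """Return the instance count needed for the current load.

    All parameters are hypothetical; real autoscalers use richer signals.
    """
    needed = math.ceil(requests_per_sec / capacity_per_instance)
    # Clamp to the configured floor and ceiling.
    return max(min_instances, min(max_instances, needed))


# Elasticity: the fleet grows and shrinks as the load changes over time.
load_over_time = [50, 250, 900, 1500, 300]
fleet_sizes = [desired_instances(load) for load in load_over_time]
print(fleet_sizes)
```

Adding more instances here is horizontal scaling; doing it automatically in both directions, as the load list rises and falls, is what makes the setup elastic.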

8. What is a data center in the context of cloud computing?

9. How does cloud computing ensure data availability?

- Redundancy: Cloud providers replicate data across multiple storage devices and servers, ensuring that if one device fails, the data is still accessible from another.

- Geographic Distribution: Data and resources are spread across multiple geographical regions, often called availability zones or regions.

- Automated Failover Systems: Cloud platforms have automated systems that monitor the health of servers and networks. If a component fails, traffic is automatically redirected to a working component, minimizing downtime.

- Backup and Disaster Recovery: Cloud providers regularly back up data and systems to ensure rapid recovery in case of data loss or system failures.
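
The redundancy and failover ideas above can be sketched in a few lines. This is a simplified simulation, not a real storage client: the `Replica` class, the zone names, and the `read_with_failover` helper are hypothetical and exist only to show a read falling through to the next healthy copy.

```python
class Replica:
    """A hypothetical storage replica in one availability zone."""

    def __init__(self, zone: str, data: dict, healthy: bool = True):
        self.zone = zone
        self.data = data
        self.healthy = healthy

    def read(self, key: str) -> str:
        if not self.healthy:
            raise ConnectionError(f"replica in {self.zone} is unavailable")
        return self.data[key]


def read_with_failover(replicas: list, key: str) -> tuple:
    """Return (zone, value) from the first replica that answers."""
    for replica in replicas:
        try:
            return replica.zone, replica.read(key)
        except ConnectionError:
            continue  # Fail over to the next replica.
    raise RuntimeError("all replicas are unavailable")


# The same record is replicated to three zones (redundancy).
record = {"order-42": "shipped"}
replicas = [
    Replica("zone-a", dict(record), healthy=False),  # simulated outage
    Replica("zone-b", dict(record)),
    Replica("zone-c", dict(record)),
]
served_from, value = read_with_failover(replicas, "order-42")
print(served_from, value)
```

Because every zone holds a full copy, the outage in the first zone is invisible to the caller apart from the read being served from a different location.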

10. What is multi-tenancy in the cloud?

11. What is Edge Computing?

Edge computing is a distributed computing paradigm where data processing and storage are moved closer to the devices and systems that generate the data, rather than relying solely on centralized cloud or data centers.

It reduces latency, improves real-time processing, and minimizes bandwidth usage, making it ideal for applications like autonomous vehicles, smart cities, and industrial IoT.

12. What's the difference between Edge Computing and Cloud Computing?

| Edge Computing | Cloud Computing |
| --- | --- |
| Edge Computing is a distributed computing architecture that brings computing and data storage closer to the source of data. | Cloud Computing is a model for delivering information technology services over the internet. |
| Processing is done at the edge of the network, near the device that generates the data. | Data Analysis and Processing are done at a central location, such as a data center. |
| Edge Computing is more expensive, as specialized hardware and software may be required at the edge. | Cloud Computing is less expensive, as users only pay for the resources they use. |

13. What is a virtual machine (VM)?

A virtual machine (VM) in cloud computing is a software-based, digital version of a physical computer, allowing users to run different operating systems and applications on a single physical server. In cloud computing, VMs are crucial for enabling a multi-tenant environment where multiple users can share server resources efficiently.

14. What is cloud storage, and how is it used?

- Backup/Recovery: It protects data from loss (e.g., AWS Backup).

- Data Sharing: Enables collaboration (e.g., Dropbox).

- App Hosting: It stores app data (e.g., Azure Blob Storage).

- Archiving: It provides long-term storage for compliance (e.g., AWS S3 Glacier).

15. Explain the concept of pay-as-you-go in cloud computing.

The pay-as-you-go model, also known as pay-per-use, is a core feature of cloud computing that allows users to access computing resources (such as compute power, storage, databases, or networking) and pay only for what they actually use. Its main benefits are:

- Cost Efficiency: Reduces waste by charging only for used resources, unlike over-provisioned on-premises servers.

- Agility: Enables rapid experimentation, testing, or deployment without financial risk.

- Predictable Budgeting: Tools like AWS Cost Explorer or Azure Cost Management help track usage and optimize spending.
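
A small worked example may make the billing model concrete. The unit prices below are invented for illustration and do not reflect any provider's real pricing; the point is only that the bill follows metered usage.

```python
# Hypothetical unit prices; real prices vary by provider, region, and service.
PRICE_PER_VM_HOUR = 0.10    # USD per VM-hour
PRICE_PER_GB_MONTH = 0.02   # USD per GB stored per month


def monthly_bill(vm_hours: float, storage_gb: float) -> float:
    """Bill only for metered usage: VM hours run plus storage held."""
    total = vm_hours * PRICE_PER_VM_HOUR + storage_gb * PRICE_PER_GB_MONTH
    return round(total, 2)


# A VM that runs 8 hours a day for 22 working days, with 500 GB stored.
pay_as_you_go = monthly_bill(vm_hours=8 * 22, storage_gb=500)

# The same VM left running around the clock for a 30-day month.
always_on = monthly_bill(vm_hours=24 * 30, storage_gb=500)

print(pay_as_you_go, always_on)
```

Under these assumed prices, shutting the VM down outside working hours cuts the compute part of the bill from 720 billed hours to 176, while the storage charge stays the same.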

16. What is a content delivery network (CDN)?

A Content Delivery Network (CDN) is a distributed network of servers strategically placed in multiple locations worldwide to deliver web content, such as images, videos, or web pages, to users faster and more reliably.

In the context of cloud computing, CDNs cache content at edge locations closer to users, reducing latency and improving performance.

- How it Works: CDNs store copies of static content (e.g., images, videos, scripts) on edge servers. When a user requests content, the CDN routes the request to the nearest edge server, minimizing data travel time.

- Examples: AWS CloudFront, Azure CDN, Google Cloud CDN.

17. How do you access cloud services from your local machine?

- Web Portals: Use browser-based consoles like AWS Management Console or Azure Portal for manual management.

- Command-Line Interfaces (CLI): Use tools like AWS CLI (aws s3 ls) or Azure CLI to automate tasks.

- APIs/SDKs: Programmatic access via SDKs (e.g., Boto3 for Python) for app integration. Example: s3.upload_file('file.txt', 'my-bucket', 'file.txt')

- RDP/SSH: Directly access VMs (e.g., SSH to AWS EC2: ssh -i key.pem ec2-user@ip).

18. What are microservices?

19. What is an API Gateway?

20. What is cloud bursting? When would you use it?

Cloud Interview Questions for Intermediate

21. What is cloud computing architecture?

Cloud computing architecture is the framework of components and their interactions that enable the delivery of cloud services, such as compute, storage, and networking, over the internet. The architecture typically includes:

- Frontend: The client side, including user interfaces (e.g., web portals like AWS Management Console) and client devices.

- Backend: The cloud infrastructure managed by the provider, including servers, storage, and databases, along with virtualization and security layers.

- Service Models: Different service models serve different purposes: IaaS (e.g., AWS EC2), PaaS (e.g., Google App Engine), SaaS (e.g., Microsoft 365).

- Deployment Models: Public, private, hybrid, or multi-cloud.

- Key Components: Hypervisors, management software, networking, and storage systems.

22. What is the difference between cloud and data center?

| Aspect | Cloud | Data Center |
| --- | --- | --- |
| Definition | Cloud is a virtual resource that helps businesses to store, organize, and operate data efficiently. | A Data Center is a physical resource that helps businesses store, organize, and operate data efficiently. |
| Ownership | Owned and maintained by a cloud service provider (AWS, Azure, GCP, etc.). The user rents resources. | Owned and maintained by the organization that uses it. |
| Scalability | Highly scalable: resources can be increased or decreased instantly based on demand. | Limited scalability: scaling requires buying and installing new hardware, which is time-consuming. |
| Operate | Cloud is easy to operate, which makes it a viable option for most teams. | Data Centers require experienced staff to operate, which makes them a less viable option for many teams. |
| Cost | The maintenance cost is lower, because the service provider maintains the infrastructure. | The maintenance cost is higher, because the organization maintains the hardware itself. |

23. What is a hypervisor in cloud computing?

A hypervisor, also known as a Virtual Machine Monitor (VMM), is software or firmware that creates, manages, and runs virtual machines (VMs) in cloud computing environments. It acts as an intermediary between physical hardware and virtual machines, enabling multiple operating systems to share the same physical server by abstracting and allocating resources like CPU, memory, and storage.

24. What is "serverless computing," and what are its use cases?

Serverless computing is a cloud model where the provider fully manages the infrastructure, including servers, scaling, and maintenance, allowing developers to focus on writing and deploying code. Functions run in response to events, and you’re only charged for the compute time used. It’s event-driven, auto-scaling, and requires no server management.

Use cases include:

- API/backend: Create scalable RESTful APIs using services like AWS Lambda, Azure Functions, or Google Cloud Functions. These APIs interact with databases, perform business logic, and return data to clients.

- Event-driven applications: Process real-time data from IoT devices or user actions. Functions can run at certain times of day or under certain conditions, e.g., sending an email to users when a sensor reports a certain temperature.

- Batch jobs: Execute scheduled tasks like report generation.
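
To make the API/backend use case concrete, here is a minimal sketch of a function in the style of an AWS Lambda Python handler. The `handler(event, context)` signature and the `statusCode`/`body` response shape follow the convention used with API Gateway proxy-style requests; the event contents and the greeting logic are assumptions made up for this example.

```python
import json


def handler(event, context):
    """Lambda-style entry point: runs once per incoming event."""
    # Query parameters may be absent, so fall back to an empty dict.
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


# Local invocation with a hand-built event; in the cloud the platform supplies it.
response = handler({"queryStringParameters": {"name": "Ada"}}, None)
print(response["statusCode"], response["body"])
```

Note that the code contains no server, port, or process management: the platform decides when and where the function runs, which is the core of the serverless model.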

25. What is containerization, and how is it different from virtualization?

Containerization is a lightweight form of virtualization where applications and their dependencies are packaged together into containers. Virtualization, by contrast, uses a hypervisor to run multiple virtual machines (VMs) on a single physical server.

| Aspect | Containerization | Virtualization |
| --- | --- | --- |
| Architecture | Shares the host OS kernel; apps run in isolated containers. | Uses a hypervisor; each VM runs its own full OS. |
| Resource Usage | Lightweight, minimal CPU/RAM overhead. | Heavy; needs a full OS per VM, so more resource usage. |
| Portability | Highly portable across platforms. | Less portable; needs OS compatibility. |
| Use Cases | Microservices, CI/CD, rapid deployment. | Legacy app support, OS-specific workloads, heavy apps. |

Containerization prioritizes efficiency and portability, while virtualization focuses on full isolation and flexibility for diverse OS environments.

26. What is server virtualization?

Server virtualization is the process of creating multiple virtual machines (VMs) on a single physical server using a hypervisor, such as VMware or Hyper-V. Each VM runs its own operating system and applications, fully isolated from others, effectively dividing the server’s resources like CPU, memory, and storage.

27. Explain the concept of serverless computing.

Serverless computing is a cloud model where the cloud provider manages all infrastructure, including servers, scaling, and maintenance, so developers can focus solely on writing and deploying code. Applications are broken into functions that execute in response to events, like HTTP requests or database changes, and scale automatically with demand.

- Event-Driven Execution: Functions or services are triggered by events such as API requests, database changes, or file uploads.

- Automatic Scaling: Resources scale up or down automatically based on workload.

- Pay-as-You-Go Model: You pay only for the execution time and resources used, not for idle capacity.

- No Server Management: The cloud provider handles all infrastructure tasks like OS updates, security patches, and scaling.

28. How does cloud computing ensure disaster recovery?

- Data Replication: Storing backups across multiple geographic regions to protect against site failures.

- Automated Backups: Regularly scheduled snapshots or incremental backups to ensure data integrity.

- Failover Systems: Switching to standby resources in another region during outages.

- Scalable Infrastructure: Quickly provisioning resources to restore services.

- DR-as-a-Service: Platforms like AWS Elastic Disaster Recovery or Azure Site Recovery automate recovery processes.

29. What is cloud orchestration, and why is it important?

Cloud orchestration is the automated management and coordination of cloud resources, services, and workflows to streamline operations across complex, distributed environments. It involves tools like Kubernetes, Terraform, or AWS CloudFormation to automate tasks like provisioning servers, configuring networks, and deploying applications.

- Efficiency: Reduces manual effort, speeding up deployment and management.

- Consistency: Ensures uniform configurations across environments, minimizing errors.

- Scalability: Automates resource scaling to handle varying workloads.

- Multi-Cloud Management: Coordinates resources across different cloud providers.

30. How do you secure data in the cloud?

Securing data in the cloud involves multiple layers of protection:

- Encryption: Encrypt data at rest (e.g., AES-256) and in transit (e.g., TLS/SSL) to prevent unauthorized access.

- Access Control: Use IAM policies to restrict access to authorized users and roles only.

- Network Security: Deploy firewalls, VPCs, and private subnets to isolate resources.

- Data Backups: Regularly back up data with versioning and store copies in multiple regions.

- Monitoring and Auditing: Enable logging (e.g., AWS CloudTrail) and use tools to detect and respond to threats.

- Compliance: Adhere to standards like GDPR or HIPAA for regulatory requirements.
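
The access-control layer can be illustrated with a simplified policy check. This is a sketch loosely modelled on JSON-style IAM policies, not a real provider's evaluation engine: the policy statements, action names, and resource paths are hypothetical. It shows two common rules: access is denied by default, and an explicit deny overrides any allow.

```python
from fnmatch import fnmatchcase

# A hypothetical policy, loosely modelled on JSON-style IAM policies.
POLICY = (
    {"effect": "Allow", "action": "storage:Get*", "resource": "bucket/reports/*"},
    {"effect": "Deny", "action": "storage:*", "resource": "bucket/reports/secret/*"},
)


def is_allowed(action: str, resource: str, policy=POLICY) -> bool:
    """Default deny; an explicit Deny overrides any matching Allow."""
    allowed = False
    for statement in policy:
        matches = (fnmatchcase(action, statement["action"])
                   and fnmatchcase(resource, statement["resource"]))
        if not matches:
            continue
        if statement["effect"] == "Deny":
            return False  # Explicit deny always wins.
        allowed = True
    return allowed


print(is_allowed("storage:GetObject", "bucket/reports/q1.csv"))
print(is_allowed("storage:GetObject", "bucket/reports/secret/pay.csv"))
print(is_allowed("storage:PutObject", "bucket/reports/q1.csv"))
```

Only the first request is permitted: the second matches the explicit deny, and the third matches no allow statement at all, so it falls back to the default deny.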

31. What are IAM (Identity and Access Management) policies?

32. What is a VPC (Virtual Private Cloud)?

A Virtual Private Cloud (VPC) is an isolated, virtual network within a cloud provider’s infrastructure where you can deploy resources like servers and databases. It allows you to define IP ranges, subnets, and routing rules, providing control over network security and connectivity.
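
Planning IP ranges and subnets can be tried out locally with Python's standard `ipaddress` module. The address range and the subnet names below are assumptions chosen for illustration; no cloud API is called.

```python
import ipaddress

# Hypothetical VPC address range; any private CIDR block would work.
vpc = ipaddress.ip_network("10.0.0.0/16")

# Carve the VPC into /24 subnets and take the first three.
subnets = list(vpc.subnets(new_prefix=24))[:3]
public_subnet, private_app, private_db = subnets

for name, net in [("public", public_subnet),
                  ("private-app", private_app),
                  ("private-db", private_db)]:
    print(name, net, net.num_addresses)

# Check which subnet a given host address belongs to.
host = ipaddress.ip_address("10.0.1.25")
print(host in private_app)
```

In a real VPC, the provider typically reserves a few addresses in each subnet, so the usable count is slightly lower than `num_addresses` reports.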

33. How does caching improve cloud performance?

Caching improves cloud performance by storing frequently accessed data in a high-speed storage layer. This eliminates the need to repeatedly fetch data from slower storage or recompute results.

- Reduced latency: Data retrieval is much faster from cache than from a database.

- Lower load on backend systems: Decreases database queries and API calls.

- Improved scalability: Systems can handle more users without performance degradation.

34. Explain cloud cost optimization strategies.

Cloud cost optimization means reducing unnecessary spending while maintaining performance. Cloud cost optimization strategies include:

- Right-sizing resources: Choose instance sizes that match actual usage rather than over-provisioning.

- Use reserved or spot instances: Commit to long-term reserved capacity for predictable workloads, or use cheaper spot instances for flexible tasks.

- Auto-scaling: Automatically increase or decrease resources based on demand to avoid paying for idle capacity.
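
Right-sizing in particular lends itself to a quick calculation. The sketch below picks the smallest instance size that would keep average CPU at or below a target. The size catalogue, the 60% target, and the `recommend_size` helper are assumptions for illustration; a real review would also weigh memory, I/O, and peak load.

```python
# Hypothetical instance catalogue: (size name, vCPUs), smallest first.
SIZES = [("small", 2), ("medium", 4), ("large", 8), ("xlarge", 16)]


def recommend_size(current_vcpus: int, avg_cpu_percent: float,
                   target_percent: float = 60.0) -> str:
    """Pick the smallest size that keeps average CPU at or below the target."""
    # vCPUs' worth of work actually being done, on average.
    used_vcpus = current_vcpus * avg_cpu_percent / 100.0
    for name, vcpus in SIZES:
        if used_vcpus / vcpus * 100.0 <= target_percent:
            return name
    return SIZES[-1][0]  # Nothing fits: keep the largest size.


# An 8-vCPU instance averaging 12% CPU does about one vCPU of work.
print(recommend_size(current_vcpus=8, avg_cpu_percent=12))
# An 8-vCPU instance averaging 55% CPU is already sized sensibly.
print(recommend_size(current_vcpus=8, avg_cpu_percent=55))
```

The first instance could drop to the smallest size in this catalogue, while the second stays where it is.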

Cloud Computing Advanced Interview Questions

35. How would you design a highly available cloud architecture?

To design a highly available cloud architecture, you should focus on eliminating single points of failure, distributing workloads across multiple locations, and implementing automated failover mechanisms. This involves redundancy, monitoring, and automated scaling to ensure your application can withstand failures and maintain uptime.

Strategies for Designing Highly Available Cloud Architecture:

- Eliminate Single Points of Failure: Deploy multiple instances across Availability Zones or regions. Use load balancers and set up active-active or active-passive configurations. Ensure redundancy in networks, databases, and storage to keep services running if one part fails.

- Implement Automated Failover: Set up health checks, autoscaling, and automatic traffic redirection to healthy instances or backup sites. This minimizes downtime during failures or traffic surges.

- Continuous Monitoring and Testing: Use monitoring tools and alerts to catch issues early. Regularly test failover systems. Apply chaos engineering to identify and fix weaknesses before they lead to outages.

- Data Protection and Recovery: Back up and replicate data regularly across locations. Keep a detailed disaster recovery plan to restore operations quickly during major outages.

- Consider Serverless and Managed Services: Use serverless functions and managed cloud services like databases or queues. This reduces the complexity of managing infrastructure and improves reliability.

- Design for Failure: Build systems that can handle failures gracefully and isolate problems. This prevents localized issues from affecting the entire application.

By implementing these strategies, you can build a highly available cloud architecture that can withstand various types of failures and maintain uptime, ensuring a positive user experience.
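
The value of removing single points of failure can be estimated with basic probability. The sketch below assumes that components fail independently, which is an idealization, and uses made-up availability figures. Redundant copies combine as 1 - (1 - a)^n, while components that must all be up multiply.

```python
def parallel_availability(single: float, copies: int) -> float:
    """Availability of redundant copies when any one of them suffices."""
    return 1 - (1 - single) ** copies


def series_availability(*components: float) -> float:
    """Availability of a chain where every component must be up."""
    result = 1.0
    for availability in components:
        result *= availability
    return result


# Assumed figure: each zone is up 99% of the time, failing independently.
one_zone = parallel_availability(0.99, copies=1)
two_zones = parallel_availability(0.99, copies=2)
three_zones = parallel_availability(0.99, copies=3)
print(round(one_zone, 6), round(two_zones, 6), round(three_zones, 6))

# A request path through a load balancer (99.99%) and two redundant zones.
print(round(series_availability(0.9999, two_zones), 6))
```

Under these assumptions, a second zone lifts availability from 99% to about 99.99%. The series calculation shows why every layer needs redundancy: the whole path can never be more available than its weakest non-redundant component.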

36. Explain the concept of multi-cloud strategy.

- An e-commerce company might run its website frontend on AWS, analytics pipelines on Google Cloud, and internal collaboration tools on Azure.

37. What Is Cloud Security Compliance?

Cloud security compliance is the set of rules, best practices, and policies that an organization follows to secure data in its cloud environments and to comply with applicable regulations and standards such as HIPAA and GDPR. Regulatory bodies and law enforcement agencies have framed these laws, regulations, and standards in response to the growing number of cybersecurity attacks and data privacy risks. They help organizations of all sizes and industries keep business and customer data private and secure. These standards are even more important for highly regulated sectors, such as finance, government, healthcare, and the military, where data is highly confidential.

By complying with cloud security standards, you can uphold your reputation in the industry before your customers, stakeholders, partners, and third parties, and maintain trust. This also enables you to prevent security risks like data breaches, permission escalations, and more.

38. How would you implement CI/CD in a cloud environment?

Implementing CI/CD (Continuous Integration/Continuous Deployment) in a cloud environment involves setting up an automated workflow that builds, tests, and deploys applications with minimal manual intervention. The steps are as follows:

- Version Control: Store source code in a repository like GitHub, GitLab, or Bitbucket.

- Continuous Integration (CI): Use cloud-based CI tools like AWS CodeBuild, Azure DevOps Pipelines, or GitHub Actions to automate build and testing on every code commit. Integrate unit tests, integration tests, and security scans in the pipeline.

- Artifact Management: Store build artifacts in cloud storage or container registries like Amazon ECR, Azure Container Registry, or Docker Hub.

- Continuous Deployment (CD): Use services like AWS CodeDeploy, Azure Web Apps, or Google Cloud Run to automatically deploy to staging and production environments. Implement blue-green or canary deployments to reduce downtime and risk.

- Infrastructure as Code (IaC): Provision cloud resources with Terraform, AWS CloudFormation, or Azure Resource Manager templates to ensure consistency.

- Monitoring & Rollback: Integrate cloud monitoring tools (AWS CloudWatch, Azure Monitor) and set up automated rollback in case of deployment failures.

39. How do you monitor and log cloud resources for security and performance?

40. Describe a real-world scenario where you optimized cloud costs significantly.

Real-World Cloud Cost Optimization Scenario: While I was managing a SaaS platform hosted on AWS, monthly cloud costs unexpectedly grew by nearly 40% over three months. On investigation, we found:

- Overprovisioned EC2 Instances: Many were running at <15% CPU utilization.

- Unutilized Resources: Old EBS volumes and idle Elastic IPs from retired environments.

- Data Transfer Costs: Large amounts of inter-region traffic between services.

Optimization Steps Taken:

- Right-Sizing & Autoscaling: Downgraded underutilized instances and enabled autoscaling to match real demand.

- Storage Cleanup: Deleted unused EBS volumes, snapshots, and orphaned resources.

- Reserved & Spot Instances: Migrated stable workloads to reserved instances and batch jobs to spot instances.

- Data Transfer Optimization: Collocated services in the same region to cut transfer costs.

- Monitoring & Alerts: Set AWS Budgets alerts and integrated CloudWatch metrics for ongoing tracking.

Outcome:

- Achieved ~35% monthly cost reduction without sacrificing performance.

- Improved scalability and eliminated waste, creating a cost-aware culture in the team.

41. How do you ensure zero downtime during cloud migrations?

- Blue-Green Deployment: Run two identical environments (blue: current, green: new). Deploy changes to green, test thoroughly, then switch traffic via DNS or load balancer with no downtime.

- Incremental/Canary Migration: Move a small percentage of users or workloads to the new environment first. Monitor performance and expand gradually.

- Data Synchronization: Use continuous replication tools (e.g., AWS DMS, Azure Database Migration Service) so the target database stays in sync until the final cutover.

- Load Balancing & Traffic Shaping: Use global load balancers to gradually redirect traffic, ensuring both environments can handle requests during the transition.
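
The canary approach can be sketched as a routing function that sends a stable percentage of users to the new environment. This is an illustrative simulation, not a load balancer configuration: the `route` helper, the user IDs, and the rollout steps are hypothetical. Hashing the user ID is one common way to keep each user on the same side between requests.

```python
import hashlib


def route(user_id: str, canary_percent: int) -> str:
    """Send a stable slice of users to the new (green) environment."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # Stable bucket in the range 0..99.
    return "green" if bucket < canary_percent else "blue"


users = [f"user-{i}" for i in range(1000)]

# Widen the canary step by step while monitoring the new environment.
for percent in (0, 10, 50, 100):
    on_green = sum(route(user, percent) == "green" for user in users)
    print(percent, on_green)
```

Because a user's bucket never changes, anyone moved to green at 10% stays on green as the percentage grows, and rolling back is just a matter of lowering the percentage again.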

42. What is edge computing, and how does it integrate with the cloud?

- Hybrid Processing: Time-sensitive tasks (e.g., real-time analytics, anomaly detection) are handled at the edge, while heavy processing, storage, and machine learning training happen in the cloud.

- Data Filtering & Aggregation: Edge devices preprocess raw data, sending only relevant results to the cloud, reducing costs and network load.

- Continuous Synchronization: The cloud acts as a central hub for managing, updating, and orchestrating edge devices, ensuring consistency and security.

- Scalability & AI Models: Cloud services can deploy AI models to edge devices and collect feedback for further training, creating a loop of improvement.
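
The data filtering and aggregation step might look like the following on an edge device. The sensor values, the threshold, and the `summarize_at_edge` function are assumptions for illustration; the upload itself is left out, so the sketch only builds the payload that would be sent to the cloud.

```python
from statistics import mean


def summarize_at_edge(readings: list, threshold: float) -> dict:
    """Reduce raw sensor readings to a small summary before upload.

    Only the aggregate and the out-of-range readings leave the device.
    """
    anomalies = [r for r in readings if r > threshold]
    return {
        "count": len(readings),
        "mean": round(mean(readings), 2),
        "max": max(readings),
        "anomalies": anomalies,
    }


# Hypothetical temperature samples collected on an edge device.
raw = [21.0, 21.4, 20.9, 35.2, 21.1, 21.3]
payload = summarize_at_edge(raw, threshold=30.0)
print(payload)
```

Six raw readings shrink to one short summary plus the single anomalous value, which is the kind of reduction that lowers bandwidth and cloud ingestion costs.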

43. What are emerging trends in cloud computing?

Emerging Trends in Cloud Computing are:

- Generative AI Integration: Cloud providers are embedding GenAI capabilities, enabling businesses to create AI-driven applications without the burden of managing large-scale infrastructure.

- Hybrid & Multi-Cloud Adoption: Organizations leverage multiple cloud platforms to maximize flexibility, avoid vendor lock-in, and strengthen system resilience.

- Edge-Cloud Convergence with 5G: Edge computing handles real-time, low-latency processing, while the cloud manages large-scale analytics and AI model training, enhanced by 5G networks.

- Green Cloud & Sustainability: Cloud providers are focusing on energy-efficient, carbon-neutral operations to meet environmental and compliance goals.

44. What are the benefits of Cloud bursting?

- On-Demand Scalability: Handle sudden traffic surges by temporarily using public cloud resources, avoiding service slowdowns or downtime.

- Cost Efficiency: Pay only for extra resources during peak periods, avoiding the cost of permanently overprovisioning infrastructure.

- Performance Optimization: Maintain consistent application performance even during high-load events.

- Business Continuity: Reduce the risk of service disruptions by having an overflow capacity in the public cloud.

- Resource Flexibility: Leverage the best of both worlds: the security of a private cloud and the scalability of a public cloud.
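
The overflow behaviour behind these benefits can be shown with a few lines of arithmetic. The capacity figure and the `place_requests` helper are hypothetical; real bursting also involves networking, data access, and security considerations that this sketch ignores.

```python
def place_requests(incoming: int, private_capacity: int) -> dict:
    """Fill private capacity first; overflow bursts to the public cloud."""
    on_private = min(incoming, private_capacity)
    burst_to_public = max(0, incoming - private_capacity)
    return {"private": on_private, "public": burst_to_public}


# Assumed private data center capacity of 1,000 concurrent requests.
normal_day = place_requests(incoming=700, private_capacity=1000)
sale_day = place_requests(incoming=1800, private_capacity=1000)
print(normal_day, sale_day)
```

On a normal day nothing leaves the private environment, so no public cloud cost is incurred; only the 800 requests above capacity on the busy day are billed at public cloud rates.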

45. What are Cloud Service Models (IaaS, PaaS, SaaS) and give one example of each.

IaaS (Infrastructure as a Service):

- It provides virtualized computing resources like servers, storage, and networking over the internet.

- Example: Amazon EC2, Microsoft Azure Virtual Machines, Google Compute Engine.

PaaS (Platform as a Service):

- It offers a platform with development tools, runtime environment, and infrastructure so developers can build, deploy, and manage applications without worrying about servers.

- Example: Google App Engine, Microsoft Azure App Service, AWS Elastic Beanstalk.

SaaS (Software as a Service):

- It delivers ready-to-use software applications over the internet, accessible via browsers or apps, with no need for installation or maintenance.

- Example: Gmail, Salesforce, Microsoft 365.

46. What are "cloud-native" applications, and what is their architecture?

47. How can you design and implement a hybrid cloud strategy?

- Assessment of workloads: Identify which workloads are better suited for private or public clouds.

- Integration: Use tools like API gateways or service mesh for seamless communication between environments.

- Security: Implement consistent security policies across both environments.

- Orchestration: Use platforms like Anthos or Azure Arc to manage hybrid deployments effectively.

A well-designed hybrid cloud offers scalability, flexibility, and optimized cost.

48. Can you discuss the role of automation and DevOps in cloud management?

- Automation helps reduce manual intervention by using tools like Terraform, Ansible, or AWS CloudFormation to provision infrastructure, configure services, and manage repetitive tasks, improving consistency and reducing human error.

- DevOps integrates development and operations through CI/CD pipelines, enabling faster and more reliable releases in cloud environments. It ensures seamless collaboration, continuous monitoring, and rapid feedback loops.

49. What is the complete name and application of ‘Eucalyptus’ in Cloud Computing?

50. What are the Types of Cloud Computing Security Controls?

- Deterrent Controls: Deterrent controls are designed to discourage nefarious attacks on a cloud system, for example by warning would-be attackers of the consequences. These come in handy when there are insider attackers.

- Preventive Controls: Preventive controls make the system resilient to attacks by eliminating vulnerabilities in it.

- Detective Controls: Detective controls identify and react to security threats. Examples include intrusion detection software and network security monitoring tools.

- Corrective Controls: Corrective controls are activated in the event of a security attack. They limit the damage caused by the attack.

Conclusion

Cloud computing continues to reshape the way businesses operate, making it one of the most in-demand fields for professionals today. Preparing for interview questions not only strengthens your technical knowledge but also boosts your confidence in explaining concepts clearly.

Cloud developers with Azure skills earn 30% more on average. Don’t miss out—join our Azure Developer Associate course today!

FAQs

- Cloud fundamentals and definitions

- Key differences between service models and deployment models

- Hands-on practice with at least one cloud platform (AWS, Azure, or GCP)

- Common tools like Docker, Kubernetes, and Terraform

- Security concepts (identity, encryption, compliance)

LEAD-ACE: EdTech Platform